How eDoctrina Automates K–12 Grading Across 1,000+ Districts

In partnership with Itera Research, eDoctrina automated test checking inside a single workflow that connects creation, grading, and reporting for schools across the U.S.

Problem

As eDoctrina expanded across U.S. schools, assessment checking placed increasing pressure on daily operations. Teachers were responsible for grading large volumes of student work, entering results into systems, and validating accuracy before reports could be shared. Administrators faced growing expectations for timely, reliable assessment data while working within fixed staffing and reporting cycles.

The challenge extended beyond volume into repetition. Assessment steps were spread across different tools, which required the same information to be handled multiple times. Tests were created in one place, graded in another, and reported elsewhere. Each transition added delay and increased the likelihood of errors. As usage grew, this approach demanded more effort without improving clarity.

Paper-based exams added complexity to an already demanding process. Many schools relied on paper for practical, instructional, or policy reasons, which introduced scanning and transcription work into the assessment cycle. Generic automation tools failed to fit classroom conditions or integrate with reporting workflows, leaving schools with fragmented processes that consumed time without reducing workload.

Build

When Itera Research worked with eDoctrina on automated test checking, the objective was to connect assessment steps into a single, dependable workflow. Test creation, scanning, grading, and reporting needed to operate as one continuous process rather than as separate tasks.

The system was designed to support both paper and digital assessments within the same flow.

Teachers created tests aligned with curriculum and standards, distributed them in formats that matched classroom needs, and collected completed work without changing established routines. Paper exams were scanned using existing networked copiers, while digital submissions followed the same internal processing path.

Automation focused on repetitive assessment work. Grading logic, answer recognition, and score aggregation were handled by the system, while teachers retained review and oversight where professional judgment was required.
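As a rough illustration of that split between automated scoring and teacher oversight, the sketch below grades recognized answers against an answer key and sets aside any answer the recognizer was unsure about. It is a minimal sketch, not eDoctrina's implementation: the `RecognizedAnswer` type, the `grade_submission` function, and the 0.85 confidence threshold are all assumptions made for this example.

```python
from dataclasses import dataclass

# Hypothetical cutoff: answers recognized below this confidence go to a teacher.
REVIEW_THRESHOLD = 0.85


@dataclass
class RecognizedAnswer:
    """One answer as read from a scanned or digital submission (illustrative type)."""
    question_id: str
    value: str
    confidence: float  # recognizer's confidence in `value`, from 0.0 to 1.0


def grade_submission(answer_key, recognized, threshold=REVIEW_THRESHOLD):
    """Score confident answers automatically and flag uncertain ones for review."""
    score = 0
    needs_review = []
    for answer in recognized:
        if answer.confidence < threshold:
            # Uncertain recognition: leave the decision to the teacher.
            needs_review.append(answer.question_id)
            continue
        if answer_key.get(answer.question_id) == answer.value:
            score += 1
    return {"score": score, "total": len(answer_key), "needs_review": needs_review}


answer_key = {"q1": "A", "q2": "C", "q3": "B"}
recognized = [
    RecognizedAnswer("q1", "A", 0.99),  # confident and correct
    RecognizedAnswer("q2", "D", 0.97),  # confident and incorrect
    RecognizedAnswer("q3", "B", 0.40),  # unclear mark, sent to review
]
result = grade_submission(answer_key, recognized)
print(result)
```

In this sketch the same rule is applied to every submission, and only the flagged questions need a teacher's attention before the score is final.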

Results flowed directly into dashboards and reports, which removed manual re-entry and reduced reconciliation steps across teams.

Development prioritized operational stability. Schools work on fixed academic calendars, and assessment workflows cannot pause for experimentation.

Automated test checking was introduced gradually and refined through live use, allowing scale to increase without disrupting instruction.

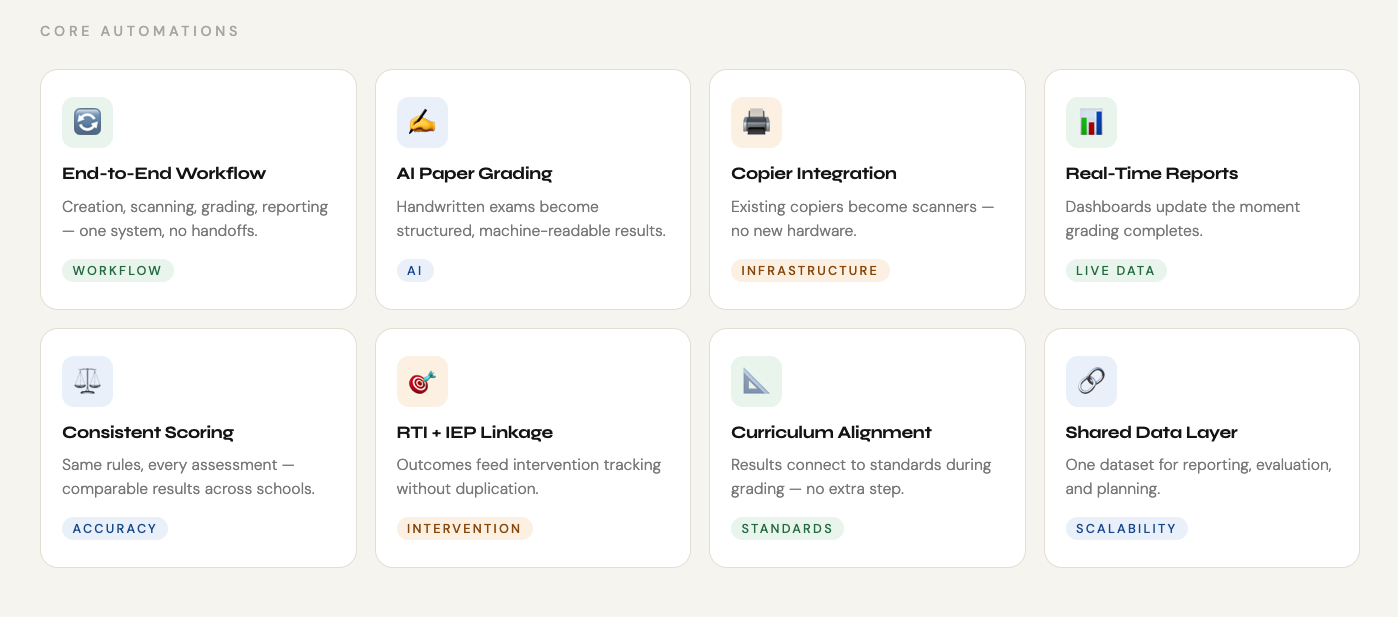

High-Impact Automations for the Classroom

- End-to-end assessment workflow

Test creation, scanning, grading, and reporting operate inside one system, which removes handoffs between tools and eliminates manual uploads or re-entry steps that previously slowed assessment cycles.

- AI-supported grading for paper-based exams

Handwritten and mixed-format assessments are processed through integrated recognition and grading logic, allowing schools to keep paper exams while still receiving structured, machine-readable results.

- Networked copier integration

Existing school copiers function as scanners within the assessment flow, which avoids additional hardware purchases and keeps scanning aligned with classroom routines.

- Real-time data flow into reporting

Graded results move directly into the reporting layer, where dashboards update as soon as assessments are processed, supporting timely visibility at classroom, school, and district levels.

- Consistent scoring and validation logic

Automated grading applies the same rules across assessments, which reduces variation introduced by manual checking and supports comparability across classes and schools.

- Direct linkage to RTI and student tracking

Assessment outcomes feed into RTI, IEP, and behavior tracking without duplication, allowing intervention planning to rely on the same data used for grading and reporting.

- Curriculum-aligned assessment results

Test outcomes connect back to curriculum standards and planning tools, which allows districts to review instructional alignment using assessment data already produced during grading.

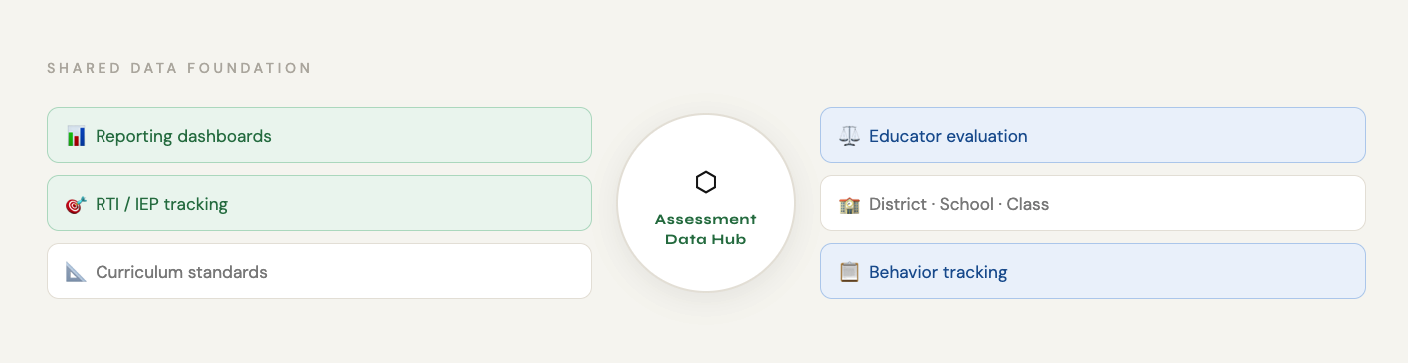

- Shared data foundation across workflows

The same assessment data supports reporting, curriculum planning, and educator evaluation, which reduces parallel data maintenance and keeps operational overhead predictable as scale increases.
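To make the link between graded results and curriculum standards concrete, the sketch below rolls per-question results up into a share-correct figure per standard. It is only an illustration under stated assumptions: the `mastery_by_standard` function, the question-to-standard mapping, and the standard codes are hypothetical and do not describe eDoctrina's data model.

```python
from collections import defaultdict


def mastery_by_standard(question_standards, question_results):
    """Return the fraction of correct answers for each standard.

    question_standards: question id -> standard code (hypothetical mapping)
    question_results:   question id -> True if answered correctly
    """
    totals = defaultdict(lambda: [0, 0])  # standard -> [correct, attempted]
    for question_id, is_correct in question_results.items():
        standard = question_standards[question_id]
        totals[standard][1] += 1
        if is_correct:
            totals[standard][0] += 1
    return {standard: correct / attempted
            for standard, (correct, attempted) in totals.items()}


# Illustrative standard codes; real codes depend on the district's curriculum.
question_standards = {"q1": "STD-A", "q2": "STD-A", "q3": "STD-B"}
question_results = {"q1": True, "q2": False, "q3": True}
summary = mastery_by_standard(question_standards, question_results)
print(summary)
```

Because the roll-up reads the results already produced during grading, no separate data entry is needed to review alignment by standard.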


Outcome

As automated test checking became part of everyday use, its effect showed up first in pace and predictability. Grading cycles shortened because tests moved through creation, scanning, grading, and reporting without pauses for manual handling. Teachers were able to return results while the material was still fresh for students, instead of waiting for data entry and verification to catch up.

Consistency improved across classrooms and schools. Automated scoring applied the same logic every time, which reduced variation caused by fatigue or interpretation differences during manual checking. Administrators received structured results earlier in the reporting cycle, which simplified district-level reviews and reduced follow-up requests to schools.

At scale, the operational impact mattered most. eDoctrina now supports over one million users and is used across more than 20 states, over 1,000 districts, and more than 5,000 schools. Automated test checking supported this growth without a matching rise in administrative workload: assessment volume increased while staffing and manual effort remained stable.

If you are building an education platform where AI needs to carry real operational weight over time, we should talk. Itera Research works with teams that design AI as part of the system itself, shaped by everyday constraints and long-term use, so growth stays manageable and trust compounds with each school year.
